Projects in Social Robots

A social robot is a physically embodied entity immersed in a complex, dynamic, and social environment, capable of perceiving and understanding others, including humans, and sufficiently empowered to behave in a manner conducive to its own goals and those of its community by engaging in social interactions with them. (An adaptation of B. Duffy’s definition.)

The ANIMATAS (MSCA – ITN – 2017 – 765955 2) project was a Marie Skłodowska-Curie European Training Network funded by Horizon 2020 (the European Union’s Framework Programme for Research and Innovation) and coordinated by Sorbonne Université – SU (Paris, France). The ANIMATAS project focused on the following objectives:

1. Exploration of fundamental questions about how people perceive the interconnections between the appearances and behaviours of robots or virtual characters.

2. Development of new social learning mechanisms that can deal with different types of human intervention and allow robots and virtual characters to learn in an unconstrained manner.

3. Development of new approaches for robots and virtual characters’ personalised adaptation to human users in unstructured and dynamically evolving social interactions.

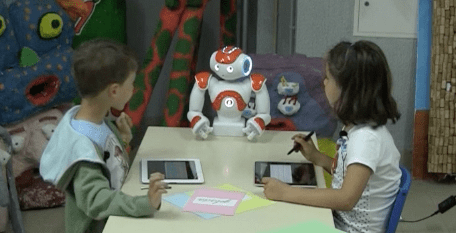

The EMOTE project (EMbOdied-perceptive Tutors for Empathy-based learning) was a European-funded (FP7-317923) research project exploring empathy in virtual and robotic tutors. The project started in December 2012 and ended in March 2016.

In EMOTE we researched how robot tutors that respond to learners can offer a new and exciting approach to learning. Human teachers respond to a myriad of cues, and a key aspect of the human teacher-learner experience is empathy. Now, with the advent of social robotics, robot tutors will be able to do just that. We developed a new generation of robot tutors that empathise with children. We developed two applications to use with our robot tutors, in particular a multi-player sustainable city game in which children learn about sustainability through interaction with the empathic tutor (using the NAO robot).